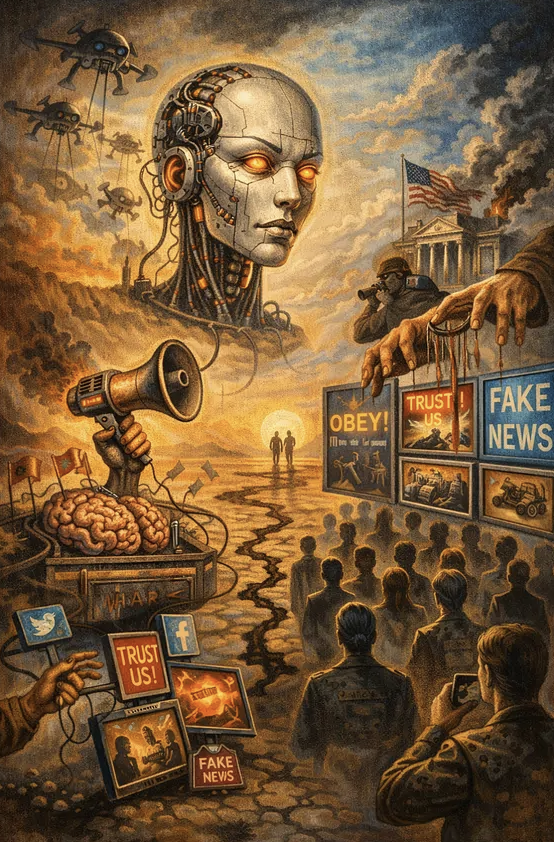

Modern AI chatbots are perceived by many users as neutral tools for information retrieval and problem-solving, while at the same time they have the potential to function as instruments of large-scale psychological influence. This gap between public perception and actual capabilities is considered strategically significant. Large language models operate based on so-called system prompts, which define what content is allowed, how topics are framed, and which sources are treated as trustworthy. These embedded rules reflect specific values and perspectives and can lead to systematic biases, particularly in politically sensitive areas. Due to the vast reach of such systems, the repeated framing of topics influences how information is perceived and interpreted on a large scale.

In the context of modern forms of conflict, this development is linked to the concept of fifth-generation warfare. In this framework, the battlefield shifts from physical confrontation to the targeted manipulation of perception, trust, and decision-making. AI systems are regarded as particularly effective tools in this context because they provide personalized responses, are perceived as objective, and operate without a clearly identifiable source. At the same time, they enable the continuous collection of feedback, allowing communication strategies to be optimized and amplified.

In addition to internal biases introduced by developers, another risk arises from external manipulation, particularly through so-called data poisoning. In such attacks, false or manipulative content is deliberately introduced into training data or knowledge sources. Studies show that only a few hundred carefully crafted documents may be sufficient to influence a model’s behavior. These attacks range from hidden triggers that cause faulty outputs to the manipulation of external data sources accessed by AI systems. In retrieval-based systems in particular, a single manipulated document can systematically distort responses. Such attacks are difficult to detect and can propagate across successive generations of models.

At the same time, a new military concept known as Cognitive Intelligence is emerging, which treats human cognition as a distinct domain of intelligence collection. Data from digital interactions, social networks, location tracking, and AI usage are aggregated to analyze cognitive patterns, beliefs, and vulnerabilities. Based on this, targeted influence strategies can be developed that are intended to operate below the level of conscious awareness. These methods enable both the profiling of individual decision-makers and the analysis of entire societies in terms of their susceptibility to specific narratives.

The strategic significance of these developments is also reflected in political and economic conflicts. In 2026, a dispute arose between an AI company and the U.S. Department of Defense over the use of AI in military systems. The conflict involved, among other issues, the relaxation of restrictions on autonomous weapons and mass surveillance. At the same time, several technology companies have abandoned or weakened their previous commitments to limiting military applications of AI.

AI systems therefore assume multiple roles simultaneously: they are everyday tools, carriers of implicit value judgments, potential targets of manipulation, and sources of data for comprehensive analyses of human behavior. Every interaction with a chatbot can be interpreted, within these frameworks, as a source of information about patterns of thought, beliefs, and decision-making processes.

Source: Malone News